Light

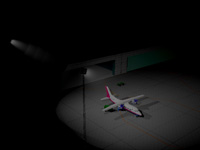

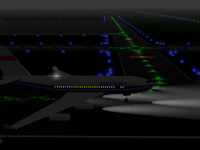

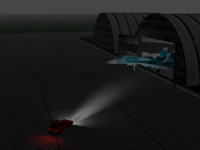

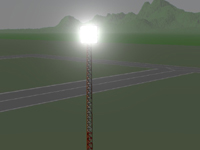

The visualization of lighting effects takes into account the physiology of the human visual system, since this is the only correct way to assess the visibility of objects in the scene. To achieve this, lighting effects are first computed for all objects in the scene as they would be represented on the human retina, and are then converted to the characteristics of the output device.

Based on this concept, we developed a model of how light-source effects are formed. In this model, the diffusion of light around a source depends on the medium through which the light travels. The model accounts for a wide range of atmospheric diffusion phenomena, such as diffusion by fog particles, smoke, and dust. It also supports effects such as blinding by intense light sources, which is especially important in vehicle simulators.
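As a rough illustration of how the medium changes the apparent strength of a light source, the sketch below uses the textbook combination of inverse-square falloff and exponential extinction (Allard's law). This is a stand-in chosen for illustration and not the model described above; the function names and the extinction values are assumptions.

```python
import math

def transmittance(distance_m, extinction_per_m):
    """Fraction of light that survives a path of the given length (Beer-Lambert law)."""
    return math.exp(-extinction_per_m * distance_m)

def apparent_illuminance(source_intensity_cd, distance_m, extinction_per_m):
    """Illuminance at the observer from a point source seen through a uniform medium.

    Combines inverse-square falloff with exponential extinction (Allard's law).
    The extinction coefficient is an assumed input: larger values mean denser
    fog, smoke, or dust.
    """
    return (source_intensity_cd
            * transmittance(distance_m, extinction_per_m)
            / distance_m ** 2)

# Hypothetical values: the same 1000 cd lamp at 500 m in clear air and in fog.
clear = apparent_illuminance(1000.0, 500.0, 0.00005)
foggy = apparent_illuminance(1000.0, 500.0, 0.005)
print(f"clear air: {clear:.3e} lx, fog: {foggy:.3e} lx")
```

A full glow or halo effect would also need a model of the light scattered back toward the observer, which this sketch leaves out.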

Aliasing is known to occur when light sources are synthesized. It shows up as the disappearance of some light sources and is especially pronounced when the observer is moving. We have developed methods that eliminate this effect and reduce image synthesis time.
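One common way to keep small light sources from disappearing between pixel samples is to clamp their on-screen size to a minimum and dim them in proportion, so the total emitted energy is preserved. The sketch below shows that general idea only; it is not necessarily the method referred to above, and the function name and the one-pixel threshold are assumptions.

```python
def light_point_footprint(projected_diameter_px, intensity, min_diameter_px=1.0):
    """Return (drawn_diameter_px, drawn_intensity) for a distant light source.

    A source whose projection is smaller than min_diameter_px can fall between
    pixel samples and flicker or vanish as the observer moves. Here it is drawn
    at the minimum size, and its intensity is scaled by the area ratio so the
    total energy on screen stays the same.
    """
    if projected_diameter_px >= min_diameter_px:
        return projected_diameter_px, intensity
    area_ratio = (projected_diameter_px / min_diameter_px) ** 2
    return min_diameter_px, intensity * area_ratio

# A light that projects to half a pixel is drawn one pixel wide at a quarter of its intensity.
print(light_point_footprint(0.5, 8.0))
```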

Shadow

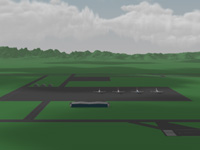

The main problem in synthesizing terrain shadows on large landscapes is finding the patch of surface that could cast a shadow on the current point of interest. To solve this problem, we have developed a set of fast algorithms that locate the patches of terrain that could potentially cast a shadow onto a point on the terrain or on an artificial object. Shadow synthesis for terrain covers shadows that fall on the terrain itself as well as on static and dynamic artificial objects in the 3D scene.

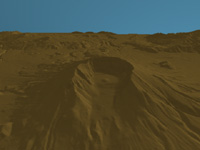

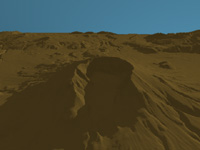

The sample images show terrain with and without shadows. The sun's elevation above the horizon is 15 degrees and its azimuth is 60 degrees.
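To make the search problem concrete, the sketch below tests a single terrain point for shadow by marching across a height grid toward the sun. This is the straightforward baseline and not the fast algorithms mentioned above. The grid layout, the azimuth convention (clockwise from the +row axis toward +column), and all names are assumptions made for illustration.

```python
import math

def in_terrain_shadow(heights, cell_size, col, row,
                      sun_elevation_deg, sun_azimuth_deg):
    """Return True if the terrain blocks the sun at grid cell (col, row).

    heights is a list of rows of terrain heights in the same units as cell_size.
    The ray starts at the terrain surface and is sampled every half cell using
    the nearest grid cell (no interpolation).
    """
    n_rows, n_cols = len(heights), len(heights[0])
    max_height = max(max(r) for r in heights)
    azimuth = math.radians(sun_azimuth_deg)
    dx, dy = math.sin(azimuth), math.cos(azimuth)    # horizontal direction toward the sun
    rise = math.tan(math.radians(sun_elevation_deg))  # height gained per unit of distance
    start_height = heights[row][col]
    step = 0.5 * cell_size
    t = step
    while True:
        ray_height = start_height + t * rise
        if ray_height > max_height:
            return False          # the ray is above all terrain, nothing can block it
        c = math.floor(col + t * dx / cell_size + 0.5)
        r = math.floor(row + t * dy / cell_size + 0.5)
        if not (0 <= c < n_cols and 0 <= r < n_rows):
            return False          # the ray left the terrain without being blocked
        if (r, c) != (row, col) and heights[r][c] > ray_height:
            return True           # this patch of terrain casts a shadow on the point
        t += step

# Hypothetical 5 x 5 terrain with 100 m cells and a 100 m ridge along column 3.
terrain = [[100.0 if c == 3 else 0.0 for c in range(5)] for _ in range(5)]
print(in_terrain_shadow(terrain, 100.0, 1, 1, 15.0, 60.0))
```

Early termination once the ray rises above the highest terrain point is what keeps this baseline usable; the cost still grows with the distance marched, which is why faster occluder searches matter on large landscapes.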

When images with shadows are synthesized, the shadow intensity is determined by the transparency of the object casting the shadow. As a result, the shadows of objects such as clouds have a "soft" edge. To demonstrate this effect, the three figures below show the same scene from different viewpoints. Figure 1 gives a general view of the scene. The landscape measures approximately 6 x 8 miles. The scene contains 10 cloud clusters positioned at a height of 0.6 miles, and each cloud is about 1 x 1.3 miles in size. The observer is positioned at the following heights: 10.6 miles in Figure 1, 4 miles in Figure 2, and 660 feet in Figure 3.

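Figure 1. General view of the scene, observer at 10.6 miles.

Figure 2. Observer at 4 miles.

Figure 3. Observer at 660 feet.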
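The dependence of shadow intensity on transparency can be sketched as follows, assuming each occluder between a point and the sun is described by an opacity between 0 (fully transparent) and 1 (fully opaque). The function name and the sample opacity values are hypothetical and are not taken from the system described here.

```python
def shadow_intensity(opacities):
    """Fraction of direct sunlight blocked by the occluders along the ray to the sun.

    Each occluder passes (1 - opacity) of the light that reaches it, so the
    transmitted fractions multiply along the ray.
    """
    transmitted = 1.0
    for opacity in opacities:
        transmitted *= 1.0 - opacity
    return 1.0 - transmitted

# Hypothetical opacity samples across a cloud, from its thin edge to its dense core.
# The shadow darkens gradually, which gives the "soft" edge.
for opacity in (0.0, 0.1, 0.4, 0.8, 1.0):
    print(opacity, shadow_intensity([opacity]))
```

Because an opaque object has an opacity of 1 everywhere, the same rule gives it a hard-edged, fully dark shadow.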

Examples of images with shadows:
